Understanding ML in Business

Any project involving ML or AI can be roughly divided into six phases:

- ML Problem Definition. During this stage, we state the problem and check what resources may be required to solve it.

- Dataset Selection. Correct data is necessary for any ML model to be effective, so a separate stage is devoted to selecting it.

- Data Preparation. This is the stage we review in this article. Any data must be checked and prepared before a model is trained on it.

- ML Model Design. At this stage, we build the model that best suits the problem and the available data, perform feature selection, and choose the algorithm.

- Model Training. This is the stage where we train, evaluate, and improve the model, searching for the parameters that give the best performance.

- Operationalize in Production. Finally, we deploy the model into the working environment, then monitor and tune it while estimating its impact on the situation and measuring its success.

Although data preparation is often a routine process, it is arguably the most critical stage: it can make the whole model work well or ruin its performance.

Most data science challenges occur during the data preparation stage, especially in big data machine learning projects, where the sheer amount of data makes preparation one of the most significant challenges of big data analytics. Although ML project development is often presented as a sequential process, in the real world it is usually cyclic: you move from data preparation to model tweaking and back until the desired performance is reached.

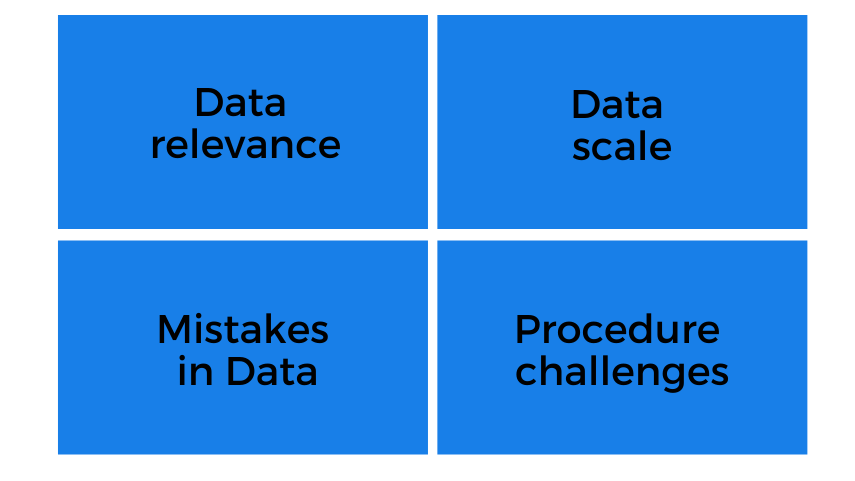

This article looks at the top challenges that almost every ML project faces on its way to success. They are quite diverse but can be divided into four big groups:

Top challenges during Data Preparation stage of Machine Learning project

I) Data Relevance

The first group of challenges concerns the source of the data. The data must describe the problem in enough detail for the project, and one of the critical challenges in big data analytics is selecting the subset that correctly describes the problem in question. Data relevance issues may even require replacing the dataset.

1. Biased Data

Biased data can lead to problems that are hard or even impossible to fix later. Consider, for instance, a dataset containing the preferences of male and female customers. If the two groups have very different numbers of entries, many ML models will become biased and may, for example, favor one group over the other. The issue becomes more serious when data quality differs between subsets: in our example, if the records of either group are corrupt (missing information, mistakes, etc.), the whole project may be at risk. Using features such as sex or gender can also be dangerous in itself, as any model that considers them may become biased toward people of a certain gender, race, etc. Bias-mitigation techniques, such as rebalancing or reweighting underrepresented groups, can help reduce such biases and make the overall analysis more robust.
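A first practical check for the imbalance described above is simply to count records per group. The sketch below assumes the group labels are available as a plain Python list (the labels and data are hypothetical) and computes inverse-frequency weights, one common way to rebalance groups without discarding records; it is an illustration, not the only or the best mitigation technique.

```python
from collections import Counter

def group_weights(groups):
    """Return one weight per record so that every group contributes equally in total.

    Inverse-frequency scheme: weight = n_records / (n_groups * count_of_that_group).
    """
    counts = Counter(groups)
    n, k = len(groups), len(counts)
    return [n / (k * counts[g]) for g in groups]

# Hypothetical records: three entries for group "F", only one for group "M".
groups = ["F", "F", "F", "M"]
print(Counter(groups))        # reveals the imbalance
print(group_weights(groups))  # the single "M" record gets a larger weight
```

Many scikit-learn estimators accept such per-record weights through a `sample_weight` argument to `fit`, so the data itself does not have to be duplicated or trimmed.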

2. Seasonal Effect in Data

This issue is far less dangerous and usually does not require replacing the dataset, but it has to be addressed before the data is used for training. Many kinds of data show clear seasonal effects; customer behavior and financial data, for example, often follow recurring patterns and trends. This challenge requires careful subset selection or a detrending (seasonal adjustment) procedure that removes the seasonal component from the data.
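Seasonal differencing is one simple alternative to a full detrending procedure. This minimal sketch assumes an evenly spaced series whose season length (`period`) is known in advance, and the sales figures are hypothetical. Each point is compared with the point one full season earlier, so the repeating pattern cancels out and only the change between seasons remains.

```python
def seasonal_difference(values, period):
    """Return y[t] - y[t - period] for every t that has a value one season earlier.

    The first `period` points have no earlier counterpart, so they are dropped.
    """
    if period <= 0:
        raise ValueError("period must be positive")
    return [values[t] - values[t - period] for t in range(period, len(values))]

# Hypothetical quarterly sales: a repeating Q1..Q4 pattern plus slow growth.
sales = [10, 20, 30, 40, 12, 22, 32, 42]
print(seasonal_difference(sales, period=4))  # [2, 2, 2, 2]
```

The seasonal swing between 10 and 40 disappears, leaving the steady growth of 2 per season.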

3. Data Changes Over Time

Observations usually change over time. For instance, consumer behavior records from a previous decade may be of little use today. In fact, such historical data can do more harm than good, as it describes processes that differ from modern-day ones. This property of data may require replacing the dataset. ML operationalization (MLOps) practices, such as monitoring incoming data for changes and retraining models regularly, can help manage the dynamic nature of data over time.
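One lightweight way to notice that data has changed over time is to compare recent observations with older ones. The sketch below is a simple mean-shift check on a single numeric feature, assuming both periods are available as lists; the 2-standard-deviation threshold and the data are hypothetical, and production monitoring tools typically rely on more thorough statistical tests per feature.

```python
from statistics import mean, stdev

def mean_shift(reference, recent):
    """Distance between the two means, in units of the reference standard deviation."""
    return abs(mean(recent) - mean(reference)) / stdev(reference)

def has_drifted(reference, recent, threshold=2.0):
    # `threshold` is a hypothetical cut-off, not a standard value; tune it per feature.
    return mean_shift(reference, recent) > threshold

# Hypothetical average basket sizes: an older period and the most recent weeks.
older = [20, 22, 19, 21, 20, 23, 21]
recent = [30, 31, 29, 32]
print(has_drifted(older, recent))  # True: the recent mean moved far from the old one
```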

4. Relevance of the Train/Test Subsets

As with biased training data, test data that covers only part of all possible events or features can yield misleading results, and a model trained on a statistically unrepresentative dataset will most likely inherit that error. Therefore, complete and unbiased train and test data are crucial. One way to avoid such issues is to use several different test sets, or to draw the test set from the primary dataset as a smaller subsample that preserves the same information and feature proportions. Ensuring that the data is representative during the training phase is essential for the model's generalization and performance.
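A stratified split is one common way to build a smaller test subset that keeps the same proportions as the primary dataset. This is a minimal pure-Python sketch; the `label_of` function and the records are hypothetical. Each label is shuffled and split separately, so every group keeps roughly the same share in both subsets.

```python
import random
from collections import defaultdict

def stratified_split(records, label_of, test_fraction=0.2, seed=0):
    """Split records so each label keeps roughly the same share in train and test."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for r in records:
        by_label[label_of(r)].append(r)
    train, test = [], []
    for items in by_label.values():
        rng.shuffle(items)  # shuffle within the group before cutting it
        n_test = round(len(items) * test_fraction)
        test.extend(items[:n_test])
        train.extend(items[n_test:])
    return train, test

# Hypothetical records (customer_id, segment) with an 80/20 segment mix.
records = [(i, "A") for i in range(80)] + [(i, "B") for i in range(80, 100)]
train, test = stratified_split(records, label_of=lambda r: r[1], test_fraction=0.25)
print(len(train), len(test))  # 75 25
```

scikit-learn's `train_test_split` offers the same behavior through its `stratify` parameter.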

II) Mistakes in the Data

A whole class of different issues can appear in a dataset. Fixing wrong formats, for example, is one of the most critical data science challenges. There are many methods for dealing with such problems, and usually only a large number of mistakes forces a dataset replacement.
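Inconsistent date formats are a typical example of wrong formats. The sketch below assumes the set of formats present in the raw data is known in advance (the `KNOWN_FORMATS` list is hypothetical) and converts every value to ISO 8601, returning `None` rather than guessing when nothing matches.

```python
from datetime import datetime

# Hypothetical formats observed in the raw data. "%b" (abbreviated month name)
# depends on the current locale; the default C locale accepts English names.
KNOWN_FORMATS = ["%Y-%m-%d", "%d/%m/%Y", "%b %d, %Y"]

def normalize_date(raw):
    """Return the date as ISO 'YYYY-MM-DD', or None if no known format fits."""
    text = raw.strip()
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue  # try the next format
    return None  # leave for manual review instead of guessing

for raw in ["2021-03-05", " 05/03/2021", "Mar 05, 2021", "5th March"]:
    print(repr(raw), "->", normalize_date(raw))
```

A string such as `05/03/2021` is ambiguous between day-first and month-first conventions, so the format list has to reflect how each source actually writes dates.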

5. Outliers

Outliers are records that differ significantly from the general distribution of the data. They may be recording mistakes, observations of rare but real events, or natural novelties in the data. In general, outliers do not benefit an ML model. Some algorithms, such as Random Forest, are relatively robust to outliers and largely ignore them, whereas others, such as Linear Regression, are sensitive to them, and outliers can significantly decrease the accuracy of many ML algorithms. Therefore, many ML applications exclude outliers, although they can be hard to detect.
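The interquartile range (IQR) rule, also known as Tukey's fences, is one common and simple way to flag outliers in a single numeric column. This sketch uses Python's standard `statistics.quantiles` (Python 3.8+); the readings are hypothetical, and `k=1.5` is the conventional but arbitrary fence width. Flagged values should be reviewed rather than dropped blindly, since some may be rare but real events.

```python
from statistics import quantiles

def iqr_outliers(values, k=1.5):
    """Return the values outside [Q1 - k*IQR, Q3 + k*IQR], in their original order."""
    q1, _, q3 = quantiles(values, n=4)  # quartiles; the median is not needed here
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]

readings = [10, 12, 11, 13, 12, 100, 11, 12]  # hypothetical sensor readings
print(iqr_outliers(readings))  # [100]
```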

6. Duplicates

Removing duplicate records is not a new challenge, but skipping this step can be a critical mistake. Duplicates give extra weight to particular observations, so the model tends to fit the repeated cases in the training dataset better than the rest. Duplicates of training records that also appear in the test subset are even more confusing for those who validate ML models, because the model is then evaluated on data it has already seen. Therefore, duplicate records should be removed from the data.
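The two checks described above, removing exact duplicates and finding records shared by the train and test subsets, can be sketched with plain Python dicts and sets, assuming rows are hashable tuples (the rows here are hypothetical). Near-duplicates that differ only in formatting would need normalization first.

```python
def drop_duplicates(rows):
    """Remove exact duplicate rows, keeping the first occurrence and the original order."""
    return list(dict.fromkeys(rows))

def train_test_overlap(train, test):
    """Rows present in both subsets; these would inflate validation scores."""
    return set(train) & set(test)

# Hypothetical rows as tuples (tuples are hashable, unlike lists or dicts).
train = [("alice", 34), ("bob", 27), ("alice", 34)]
test = [("bob", 27), ("carol", 41)]
print(drop_duplicates(train))           # [('alice', 34), ('bob', 27)]
print(train_test_overlap(train, test))  # {('bob', 27)}
```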

7. Missing Values

Missing values can significantly degrade a model's results unless they are handled. Depending on the algorithm, missing data can be dealt with in several ways: removing records with missing data, replacing missing values with a simple statistic (the mean, mode, or zero, for example), or applying a more specialized imputation algorithm. This step is crucial and requires additional analysis before any action is taken. For instance, it is vital to know how many values are missing and whether the missing features are correlated with something else (say, if data is missing only during some special event). These aspects may change the final decision, which in turn affects model performance.
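Before choosing a strategy, it helps to measure how much data is missing. The sketch below assumes a numeric column stored as a list with `None` marking missing entries (the data is hypothetical) and shows mean imputation, the simplest of the replacement options mentioned above. It ignores any correlation between missingness and other features, which is exactly what should be analyzed first.

```python
from statistics import mean

def missing_share(column):
    """Fraction of entries that are missing (None)."""
    return sum(v is None for v in column) / len(column)

def impute_mean(column):
    """Replace every None with the mean of the observed values."""
    observed = [v for v in column if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in column]

ages = [34, None, 27, 41, None, 30]  # hypothetical column: 2 of 6 values missing
print(missing_share(ages))
print(impute_mean(ages))  # [34, 33, 27, 41, 33, 30]
```

In pandas, `DataFrame.isna()` and `DataFrame.fillna()` cover the same two steps.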

8. Noise in Data

The term “noise” is most commonly used for numeric values, but it applies equally to text or any other kind of data. There are many different types of noise (for instance, static and dynamic noise), yet none of them provides valuable information to the model, and all of them complicate the preprocessing stage. Noise filtering is a complex and risky process that requires proper knowledge and experience, because denoising techniques can also remove valuable data along with the noise.
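A moving average is one of the simplest denoising filters for a numeric series, and it also illustrates the risk described above: it smooths random fluctuations, but a wide window flattens genuine peaks as well. This is a minimal sketch with hypothetical data; the `window` size is an assumption to tune per dataset.

```python
def moving_average(values, window):
    """Average each run of `window` consecutive points (a simple low-pass filter).

    Returns len(values) - window + 1 smoothed points.
    """
    if not 1 <= window <= len(values):
        raise ValueError("window must be between 1 and len(values)")
    total = sum(values[:window])
    out = [total / window]
    for i in range(window, len(values)):
        total += values[i] - values[i - window]  # slide the window one step right
        out.append(total / window)
    return out

noisy = [1, 9, 2, 8, 3, 7]  # hypothetical jittery measurements
print(moving_average(noisy, 3))
```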

III) Data Scale

Having lots of data is better than not having enough of it. With plenty of data, for instance, it is easy to remove outliers or records with missing values, or simply to train on a sample. A small amount of data, on the other hand, can become an issue even during the preprocessing stage. Therefore, selecting the right amount of data without letting the model overfit is one of the main challenges of data analytics.

9. Amounts of Data

A massive dataset usually requires special data storage techniques or cloud computing to process it. Huge datasets can also complicate the development infrastructure and increase training time, and processing vast numbers of records can take a very long time. When there is far more data than can be handled, careful sample selection or even resampling can significantly improve the model's results.
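When a dataset is too large to load at once, reservoir sampling (Algorithm R) draws a uniform random sample of fixed size in a single pass over a stream. The sketch below assumes the records can be iterated one by one; the generator standing in for a large data source is hypothetical.

```python
import random

def reservoir_sample(stream, k, seed=None):
    """Keep a uniform random sample of k items from an iterable of unknown length."""
    rng = random.Random(seed)
    reservoir = []
    for i, item in enumerate(stream):
        if i < k:
            reservoir.append(item)  # fill the reservoir with the first k items
        else:
            j = rng.randint(0, i)   # inclusive bounds: i + 1 possible values
            if j < k:
                reservoir[j] = item  # item i survives with probability k / (i + 1)
    return reservoir

# Hypothetical stand-in for a huge data source that is read lazily.
records = (f"record-{n}" for n in range(100_000))
print(reservoir_sample(records, 5, seed=1))
```

Only `k` items are held in memory at any time, regardless of how long the stream is.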

10. Randomized Data Subsets

Applying proper data split techniques is extremely important when training and testing models. Engineers often forget this and use a sequential piece of the data, which describes only a particular period or group. Such a split degrades the performance of any machine learning model. Randomizing the train/test subsets avoids this issue, but it is still worth double-checking the result.
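A shuffled split avoids the sequential-slice problem described above. This is a minimal sketch with hypothetical rows; the fixed `seed` makes the split reproducible so it can be double-checked. For forecasting tasks, where the goal is to predict future values, a time-based split is usually more appropriate than a random one.

```python
import random

def shuffled_split(rows, test_fraction=0.2, seed=0):
    """Shuffle a copy of the rows, then cut it into train and test parts."""
    rows = list(rows)  # copy, so the caller's ordering is left untouched
    random.Random(seed).shuffle(rows)
    n_test = int(len(rows) * test_fraction)
    return rows[n_test:], rows[:n_test]

# Hypothetical rows ordered by date: a contiguous slice would cover one period only.
rows = list(range(100))
train, test = shuffled_split(rows, test_fraction=0.2, seed=7)
print(len(train), len(test), sorted(test)[:5])
```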

11. Data Representation

Data can often be represented or stored in multiple ways; consider, for example, the wide and long data formats. Both have their benefits and drawbacks, and selecting the appropriate one can significantly improve performance during the training process. The tidy data principles (each variable forms a column, each observation forms a row) are one great way to avoid possible confusion. However, there is still no single approach.
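Converting between the two formats is a mechanical step. This pure-Python sketch turns wide rows (one column per month) into long, tidy rows (one row per store and month); the column names are hypothetical. pandas offers the same operation as `pandas.melt`.

```python
def wide_to_long(rows, id_key, var_name, value_name):
    """Turn one row per entity with many value columns into one row per (entity, column)."""
    long_rows = []
    for row in rows:
        for key, value in row.items():
            if key != id_key:  # every non-identifier column becomes its own row
                long_rows.append({id_key: row[id_key], var_name: key, value_name: value})
    return long_rows

# Hypothetical wide table: monthly sales per store.
wide = [{"store": "A", "jan": 10, "feb": 12}, {"store": "B", "jan": 7, "feb": 9}]
for r in wide_to_long(wide, "store", "month", "sales"):
    print(r)
```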

12. Data Matching

In its simplest form, data matching means removing duplicates. However, it becomes a serious challenge when comparing multiple datasets from multiple sources or in multiple formats. Because the data format can vary with the source, duplicate removal turns into a standardization process as well: records that describe the same entity are not always identical. Determining the comparison metric, the proper data standards, and so on is a complex and lengthy process. Matching multiple huge datasets is one of the main challenges of big data analysis.
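Matching records from different sources usually combines standardization with a similarity metric. The sketch below assumes company names are the matching key, normalizes case, punctuation, and whitespace, and scores pairs with `difflib.SequenceMatcher` from the standard library; the names and the 0.9 threshold are hypothetical. It compares every pair, so for huge datasets a blocking step that limits the candidate pairs would be needed.

```python
import re
from difflib import SequenceMatcher

def normalize(name):
    """Standardize a name: lowercase, drop punctuation, collapse whitespace."""
    name = re.sub(r"[^\w\s]", "", name.lower())
    return " ".join(name.split())

def match_records(left, right, threshold=0.9):
    """Pair each left name with its most similar right name, if the score reaches threshold."""
    matches = []
    for a in left:
        best, best_score = None, 0.0
        for b in right:
            score = SequenceMatcher(None, normalize(a), normalize(b)).ratio()
            if score > best_score:
                best, best_score = b, score
        if best is not None and best_score >= threshold:
            matches.append((a, best, round(best_score, 2)))
    return matches

crm = ["ACME Corp.", "Initech"]         # hypothetical source 1
billing = ["acme  corp", "Globex Inc"]  # hypothetical source 2
print(match_records(crm, billing))  # [('ACME Corp.', 'acme  corp', 1.0)]
```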

IV) Data Preparation Procedure

Most issues during the data preparation stage are related to the data itself. However, some serious challenges arise simply from the preparation process. Launching a preparation procedure into production is not an easy task; establishing a functioning pipeline can in itself be a significant challenge of big data analysis.

13. Including New Data Into Processes

Many projects, including those based on Machine Learning, are not fixed in time. Models are often trained or tested continuously, and as data changes its form over time, new preparation techniques need to be developed. Adjusting the processing pipeline for continuous use may require significant effort. Therefore, it is necessary to keep your model fitted to new data, constantly check its performance (and how that performance changes over time), and build some kind of continuous training pipeline.
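Checking performance continuously can start as simply as tracking the error on each new batch of data. The sketch below is a hypothetical monitor, not part of any particular library: it averages the errors of the last few batches and suggests retraining when that average exceeds the baseline by a chosen tolerance (both the 20% tolerance and the 3-batch window are assumptions).

```python
from dataclasses import dataclass, field
from statistics import mean

@dataclass
class PerformanceMonitor:
    """Track a model's error on each new batch and flag when retraining looks due."""
    baseline_error: float   # error measured when the model was last trained
    tolerance: float = 1.2  # hypothetical: flag if the error grows by more than 20%
    window: int = 3         # number of recent batches to average
    history: list = field(default_factory=list)

    def record(self, batch_error: float) -> bool:
        """Store the latest batch error; return True if retraining is suggested."""
        self.history.append(batch_error)
        recent = self.history[-self.window:]
        return mean(recent) > self.baseline_error * self.tolerance

monitor = PerformanceMonitor(baseline_error=1.0)
for err in [1.0, 1.1, 2.0]:  # hypothetical errors on three new data batches
    print(err, monitor.record(err))
```

Averaging over a window keeps a single unlucky batch from triggering retraining, while a sustained increase still does.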

14. Automated ETL/ELT Processes

Sometimes the data the model needs must first be collected and standardized. Consider web crawling, where new records are continuously added to the dataset. First, the extraction phase becomes quite complicated. Second, transforming continuously arriving data can be a real challenge. Third, it can be impossible to correctly assess the distribution of a particular value. And, again, feeding such input to the model can be a tricky task. Whenever data is used continuously or repeatedly, the data preparation technique becomes an integral part of the whole project, requiring special deployment, fast and optimized computations, etc.
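An incremental ETL run over continuously crawled data can be sketched with a timestamp watermark: each run extracts only records newer than the last processed one, standardizes them, and loads them. The field names, records, and the in-memory `sink` list are hypothetical stand-ins for a real crawler and database, and the approach assumes records are not delivered late with older timestamps.

```python
from datetime import datetime

def extract(source, watermark):
    """Extract only records newer than the last processed timestamp (the watermark)."""
    return [r for r in source if r["ts"] > watermark]

def transform(record):
    """Standardize a raw record (hypothetical fields: ts, url, price)."""
    return {
        "ts": record["ts"],
        "url": record["url"].strip().lower(),
        "price": float(record["price"]),
    }

def run_etl(source, sink, watermark):
    """One incremental run: extract new rows, transform them, load them into `sink`.

    Returns the new watermark, so the next run skips everything already loaded.
    """
    new_rows = [transform(r) for r in extract(source, watermark)]
    sink.extend(new_rows)  # "load" step; a real pipeline would write to a database
    return max((r["ts"] for r in new_rows), default=watermark)

# Hypothetical crawled records arriving over time.
source = [
    {"ts": datetime(2024, 1, 1), "url": " Shop.example/A ", "price": "1.50"},
    {"ts": datetime(2024, 1, 2), "url": "SHOP.EXAMPLE/B", "price": "2"},
]
sink = []
mark = run_etl(source, sink, watermark=datetime(2023, 12, 31))
source.append({"ts": datetime(2024, 1, 3), "url": "shop.example/c", "price": "3"})
mark = run_etl(source, sink, mark)
print(len(sink), mark)  # 3 2024-01-03 00:00:00
```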

Conclusion

Many data preparation problems are common, if not trivial, and well documented. However, many challenges in the processing stage require an individual approach: some issues can be solved without much effort, while others require hard manual work. The data preparation stage accounts for many of the challenges of data analysis.

Most issues in the data preparation stage have many solutions, and a hefty amount of theoretical knowledge is required to distinguish the best approach from merely good ones. It is a common belief that data processing can take up as much as 80% of the ML project development cycle. With no fully automated processing tool in sight, there is little reason to doubt it.